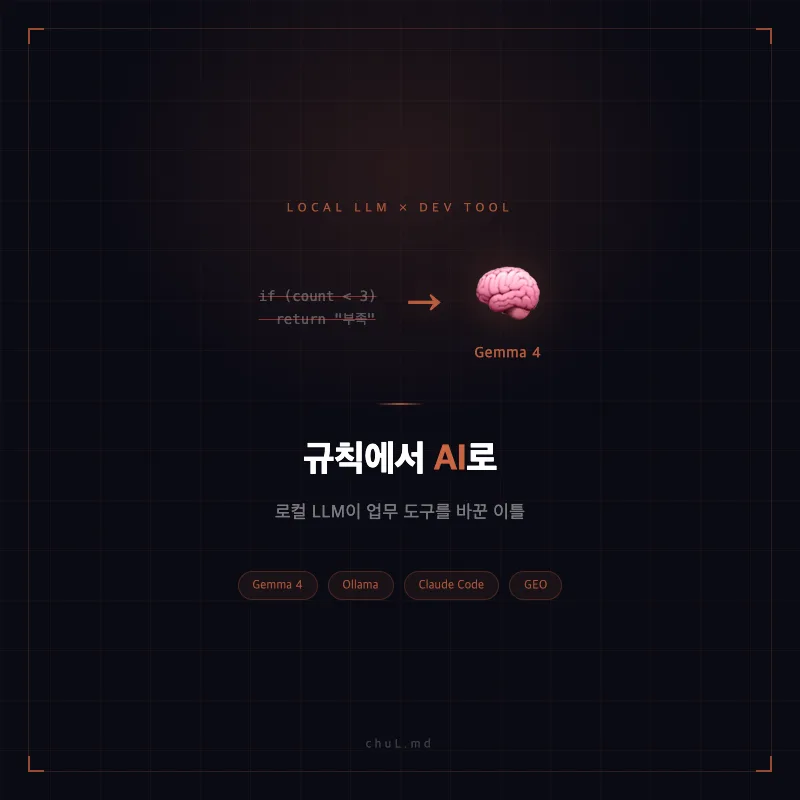

If your content analysis tools have been stuck giving vague feedback like 'Needs Improvement,' it's time to consider a local LLM-powered tool that offers specific guidance: 'Here's How to Fix It.' Adopting local LLMs like Google's Gemma 4 allows you to analyze sensitive internal data without API costs, while context-aware AI elevates your content quality to the next level.

Why Local LLM Adoption is Crucial for Content Analysis (2026)

Traditional rule-based content analysis tools often fall short by focusing on simple keyword frequency or pattern matching, failing to grasp the nuances of context. For instance, a rule stating 'keyword appears less than 3 times, so it's insufficient' doesn't truly enhance content quality. Applying a one-size-fits-all approach to diverse content types like press releases, social media posts, or expert profiles diminishes the tool's reliability. By integrating local LLMs, such as Google's publicly released Gemma 4, you can perform a deeper analysis that aligns with AI search engine preferences. This includes understanding content structure, E-E-A-T (Experience, Expertise, Authoritativeness, Trust) signals, and entity recognition. Because these LLMs run directly on your machine, they offer a significant advantage for analyzing sensitive internal data without incurring API fees or risking data exposure.

How to Upgrade Content Analysis Tools with Local LLMs

Related Articles

Building a local LLM-powered content analysis tool starts with designing effective prompts tailored to your specific goals. For instance, you'll need to define JSON schemas for different functions like content diagnostics, profile coaching, or meta-tag generation, and then fine-tune the LLM to respond within these formats. The next step involves transitioning from your existing rule-based logic to integrating the LLM, typically via Ollama REST API calls. During this process, you might encounter technical challenges such as Gemma breaking JSON formatting, exhibiting extreme score variations, or causing parser errors due to quote issues in a JavaScript environment. These hurdles can be overcome through meticulous prompt engineering and adjustments to the API call logic. Comparing Gemma 3 (4B) and Gemma 4 (7B), we found that while Gemma 4 requires more processing time, it offers deeper analysis, identifying shortcomings like poor LSI keyword utilization or lack of supporting evidence for claims. Therefore, selecting an LLM that provides accurate and detailed feedback, rather than just speed, is more effective for improving content quality.

What Are the Expected Benefits of Local LLM Implementation?

Implementing local LLMs transforms content analysis from vague 'Needs Improvement' feedback to actionable 'Here's How to Fix It' recommendations. For example, you can receive personalized coaching like 'Your Experience signal in the E-E-A-T trust axis is weak; cite real-world examples or data' or 'The depth of expertise section is missing the disease-treatment-equipment mapping; add specific treatment statistics.' This goes beyond simple scoring, enabling precise identification of content weaknesses and actionable improvement strategies, leading to tangible quality enhancements. Furthermore, since all analysis occurs locally, your sensitive internal content remains secure, never transmitted to external servers. This provides peace of mind and eliminates the cost associated with API usage, allowing for unlimited analysis.

Are There Any Precautions for Local LLM Adoption?

While local LLM-based content analysis tools offer numerous advantages, it's essential to be aware of their limitations and potential pitfalls. Firstly, response times can be slower compared to cloud-based solutions. For instance, Gemma 4 might take around 47 seconds to generate analysis results, which could be a bottleneck for real-time collaborative workflows. Secondly, LLM outputs aren't always perfect. You might encounter occasional JSON formatting errors or significant score fluctuations based on minor prompt variations, indicating the system's sensitivity. Therefore, implementing automatic retry logic and viewing LLM outputs as supplementary resources rather than infallible truths is advisable. By understanding these limitations and developing appropriate mitigation strategies, local LLMs can become incredibly valuable tools for content analysis and improvement.

For more details, check the original source below.